In today’s hyperconnected world, digital platforms shape how people communicate, learn, and express their identities. Yet, as online interactions grow, so does the challenge of creating spaces that are safe, respectful, and inclusive. The goal is not to silence expression but to build environments where meaningful engagement can flourish without the constant threat of harassment, misinformation, or harmful behavior. This article explores the core problems behind unhealthy digital spaces and proposes practical solutions that platforms can implement to build safer and healthier online ecosystems.

The Rising Challenges of Digital Spaces

Online platforms have become integral to daily life—used for entertainment, education, work, shopping, and social interaction. However, the large volume of content and diversity of users create an environment where chaos can quickly emerge if left unmanaged. Toxic behavior, cyberbullying, false information, and extremist content often gain visibility faster than constructive interactions.

One major challenge lies in scale. On the largest platforms, posts arrive far faster than any team could review by hand, so manual review alone cannot keep up. Without clear rules governing behavior, that stream of unfiltered user-generated content quickly becomes unmanageable. Another challenge comes from anonymity. While it protects privacy and encourages free expression, it also emboldens users to act without accountability.

These issues contribute to a widespread loss of trust. Users may disengage or even abandon platforms when they feel unsafe, overwhelmed, or targeted. Organizations managing digital communities must therefore address the root of the problem: how to encourage positive interaction while reducing the potential for harm.

Why Healthy Digital Spaces Matter

Healthy online environments are not simply about preventing abuse; they are about promoting well-being and community. When platforms enable respectful dialogue, users are more likely to participate actively and positively. Environments that encourage safety tend to have higher engagement and lower churn. Brands and creators also benefit from increased credibility and loyalty when users feel protected.

Moreover, the societal impact cannot be ignored. Platforms play a significant role in shaping public discourse. When negativity dominates, trust erodes and polarization increases. Conversely, when platforms encourage empathy and collaboration, they contribute to a more informed and connected society. In other words, healthy digital spaces are not just a technological goal but a cultural necessity.

The Need for Clear Guidelines

To protect users and nurture healthy interactions, platforms must first establish clear community standards. These guidelines should define acceptable behavior, outline consequences for violations, and set the tone for respectful participation. Without transparent policies, both users and moderators struggle to know where the boundaries lie.

Effective guidelines are not static—they evolve with emerging trends and risks. For instance, misinformation, hate speech, or deepfake content may require new policy adaptations. When rules are clearly communicated and consistently applied, users are more likely to follow them, and moderators can act with confidence.

Community guidelines should be written in accessible language, avoiding overly technical or legal terms. This ensures that users understand their rights and responsibilities. A strong foundation of rules is the first step toward building a supportive and trustworthy digital environment.

The Role of Technology in Monitoring Content

As digital spaces grow in size, technology becomes essential. Automated systems can detect harmful behavior at scale, flagging content before it spreads. Platforms increasingly rely on content moderation solutions to enforce safety rules. These include tools that analyze text, images, videos, and user behavior patterns to identify suspicious activity.

While technology cannot replace human judgment, it can greatly reduce the workload of moderators. Automatic detection can address repetitive or high-volume issues, such as spam, explicit content, or hate speech. It can also provide risk scores, helping moderators prioritize critical cases. This hybrid approach enhances efficiency while still allowing human experts to make final decisions.
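To make the idea of risk scores concrete, here is a minimal sketch of a review queue that always hands moderators the highest-risk case first. The `ReviewQueue` class, its method names, and the 0-to-1 score range are assumptions made for illustration, not a description of any particular moderation product.

```python
import heapq
import itertools


class ReviewQueue:
    """Hypothetical queue that surfaces the riskiest flagged item first."""

    def __init__(self):
        self._heap = []
        # Tie-breaker so items with equal scores come out in insertion order.
        self._counter = itertools.count()

    def flag(self, content_id: str, risk_score: float) -> None:
        # Assumption: an upstream detector produces a score between 0 and 1.
        if not 0.0 <= risk_score <= 1.0:
            raise ValueError("risk_score must be between 0 and 1")
        # heapq is a min-heap, so the score is negated to pop the highest first.
        heapq.heappush(self._heap, (-risk_score, next(self._counter), content_id))

    def next_case(self):
        """Return (content_id, risk_score) for the riskiest item, or None."""
        if not self._heap:
            return None
        neg_score, _, content_id = heapq.heappop(self._heap)
        return content_id, -neg_score

    def __len__(self):
        return len(self._heap)
```

The queue only orders the work. The decision on each case still rests with the human moderator who picks it up.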

Real-time monitoring is especially valuable. Harmful content spreads quickly, and delayed action can escalate tensions or expose users to danger. Modern systems can intervene early—sometimes even before a post is published—to prevent risks.

Encouraging Positive Participation

Content moderation is not only about removing harmful material; it’s also about empowering users to contribute responsibly. Platforms can nurture healthy digital spaces by promoting positive engagement rather than only policing negative behavior.

Features such as reaction prompts, informative comments, discussion starters, or behavior reminders can help shape how users interact. Platforms can also highlight constructive posts and provide community recognition for respectful contributions.

Educational nudges are another effective tool. When users attempt to post something potentially harmful, a prompt asking them to reconsider often reduces the likelihood of publishing inflammatory content. These proactive measures turn moderation into guidance rather than punishment, encouraging users to grow rather than simply comply.
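A nudge of this kind could be sketched roughly as follows. The word list, the `check_draft` and `submit` functions, and the returned status strings are all hypothetical; a real platform would more likely rely on a trained classifier than on a fixed list of terms.

```python
import re
from dataclasses import dataclass

# Hypothetical placeholder terms, used only to keep the sketch self-contained.
FLAGGED_TERMS = {"idiot", "stupid", "loser"}


@dataclass
class NudgeResult:
    show_prompt: bool
    matched_terms: list


def check_draft(text: str) -> NudgeResult:
    """Look for flagged terms in a draft before it is published."""
    words = re.findall(r"[a-z']+", text.lower())
    matched = sorted(set(words) & FLAGGED_TERMS)
    return NudgeResult(show_prompt=bool(matched), matched_terms=matched)


def submit(text: str, confirmed: bool = False) -> str:
    """Return "prompt" to ask the user to reconsider, else "published"."""
    result = check_draft(text)
    if result.show_prompt and not confirmed:
        # Nothing is blocked here; the user is only asked to take a second look.
        return "prompt"
    return "published"
```

In this sketch the user can still publish after confirming, which keeps the measure on the side of guidance rather than punishment.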

Building Trust Through Transparency

Trust is a cornerstone of any thriving digital community. Users need to know how moderation works and why decisions are made. Platforms that explain their moderation process—without revealing confidential systems—tend to gain stronger credibility.

Transparency reports, user feedback tools, and appeal mechanisms allow individuals to feel heard and acknowledged. When users understand that decisions are not arbitrary, they are more likely to cooperate with the rules and respect the system.

Acknowledging mistakes is also important. No platform is perfect, and moderation decisions can be complex. Apologizing for errors and updating policies accordingly can strengthen long-term trust and accountability.

Human Moderators Still Matter

Technology offers powerful tools, but human moderators bring empathy and contextual understanding that machines lack. They can recognize nuance, cultural references, sarcasm, and emotional tone. The key is to combine automated efficiency with human sensitivity.

However, moderation work can be demanding. Exposure to harmful content may affect mental health, leading to burnout or fatigue. Platforms should prioritize moderator well-being by offering training, psychological support, and appropriate workload distribution.

A balanced model—where machines filter large volumes of data and humans manage complex cases—can lead to more consistent and responsible moderation.

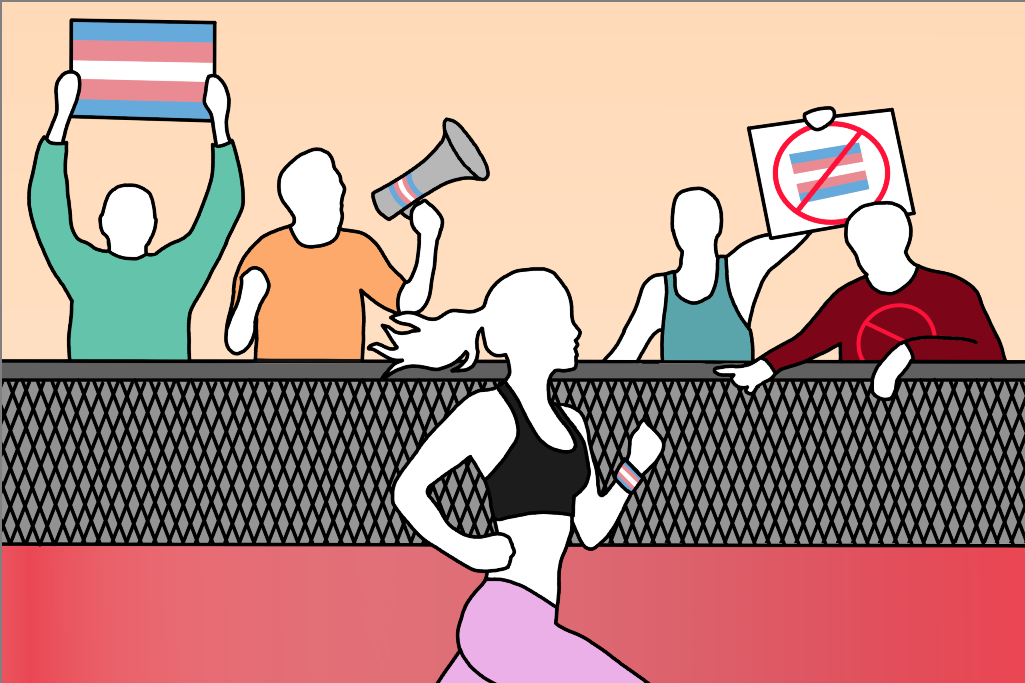

Creating Inclusive Environments

Healthy digital spaces must represent diverse voices and perspectives. Inclusive platforms allow users from different backgrounds to feel welcome and respected. This requires policies against harassment, discrimination, and targeted abuse.

Providing accessibility features is another essential step. Options like voice navigation, closed captions, and high-contrast modes ensure that users with different abilities can navigate and participate freely. Inclusion is not just ethical but also beneficial: broader participation leads to richer conversations and deeper engagement.

The Importance of User Empowerment

Users should feel they have control over their digital experience. Platforms can offer tools such as comment filters, blocking features, or visibility controls, allowing individuals to shape their surroundings according to personal comfort levels.
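As a rough illustration of such controls, the sketch below filters a comment feed using one person's block list and muted words. The `UserPreferences` and `Comment` types and the `visible_comments` function are invented for this example, and the muted-word check is a plain case-insensitive substring match, which is cruder than what a production filter would use.

```python
from dataclasses import dataclass, field


@dataclass
class UserPreferences:
    """Hypothetical per-user settings; the field names are illustrative."""
    blocked_users: set = field(default_factory=set)
    muted_words: set = field(default_factory=set)


@dataclass
class Comment:
    author: str
    text: str


def visible_comments(comments, prefs):
    """Return only the comments this user has chosen to see."""
    visible = []
    for comment in comments:
        if comment.author in prefs.blocked_users:
            continue
        lowered = comment.text.lower()
        # Simple substring match: "spoil" would also hide "spoiler".
        if any(word.lower() in lowered for word in prefs.muted_words):
            continue
        visible.append(comment)
    return visible
```

Because the filtering is applied per viewer, the same comment can stay visible to everyone else.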

Empowering users also reduces the pressure on moderation teams. When people can protect themselves using built-in tools, they become active participants in preserving a safe digital community. This collaborative model creates shared responsibility—platforms set the structure, and users help maintain it.

Implementing Scalable Solutions

As platforms grow, scalability becomes a major concern. Manual moderation alone will not sustain large digital communities. Adopting a content moderation platform helps centralize processes, organize workflows, and integrate both human and automated tools.

Scalable systems allow multiple teams to collaborate, maintain detailed records, and adapt to new challenges. Real-time collaboration, rule-based actions, and multi-language support make large-scale moderation manageable.
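Rule-based actions can be pictured as an ordered list of conditions, each paired with an action, where the first matching rule wins. The sketch below assumes a hypothetical `Report` record, made-up action names, and an arbitrary 0.8 risk threshold; it illustrates the pattern and is not the behavior of any specific moderation platform.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Report:
    """Hypothetical summary of a flagged item."""
    category: str
    risk_score: float
    language: str


@dataclass
class Rule:
    name: str
    condition: Callable[[Report], bool]
    action: str


# Rules are checked in order; the threshold and action names are assumptions.
RULES = [
    Rule("spam", lambda r: r.category == "spam", "hide_pending_review"),
    Rule("high-risk", lambda r: r.risk_score >= 0.8, "escalate_to_human"),
    Rule("non-english", lambda r: r.language != "en", "route_to_language_team"),
]


def decide(report: Report, rules=RULES, default: str = "publish") -> str:
    """Return the action of the first rule whose condition matches."""
    for rule in rules:
        if rule.condition(report):
            return rule.action
    return default
```

Keeping the rules as data rather than hard-coded branches means teams can add or reorder them as new risks emerge without rewriting the decision logic.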

A platform designed for growth promotes stability and reduces mistakes. As user-generated content increases, such infrastructure becomes essential for preventing disorder and protecting the integrity of online spaces.

A Vision for the Future

The digital world is evolving quickly, and platforms must evolve with it. The future of healthy digital spaces depends on innovation, responsibility, and user-centered design. Artificial intelligence is likely to keep improving, and expectations around ethical standards are likely to rise with it.

The true objective is not total control but balance. Free expression must coexist with safety. Open dialogue must be encouraged without enabling harm. Collaboration between users, moderators, developers, and policymakers will shape the digital communities of tomorrow.

Healthy digital spaces are achievable. With trust, guidance, and shared responsibility, platforms can create environments where users feel safe to participate, explore, and connect—without fear of hostility or misinformation.